On the building of efficient Fourier Convolutional Neural Networks

Keywords:

CNN, convolution theorem, Fourier CNN, linear transform layer

Abstract

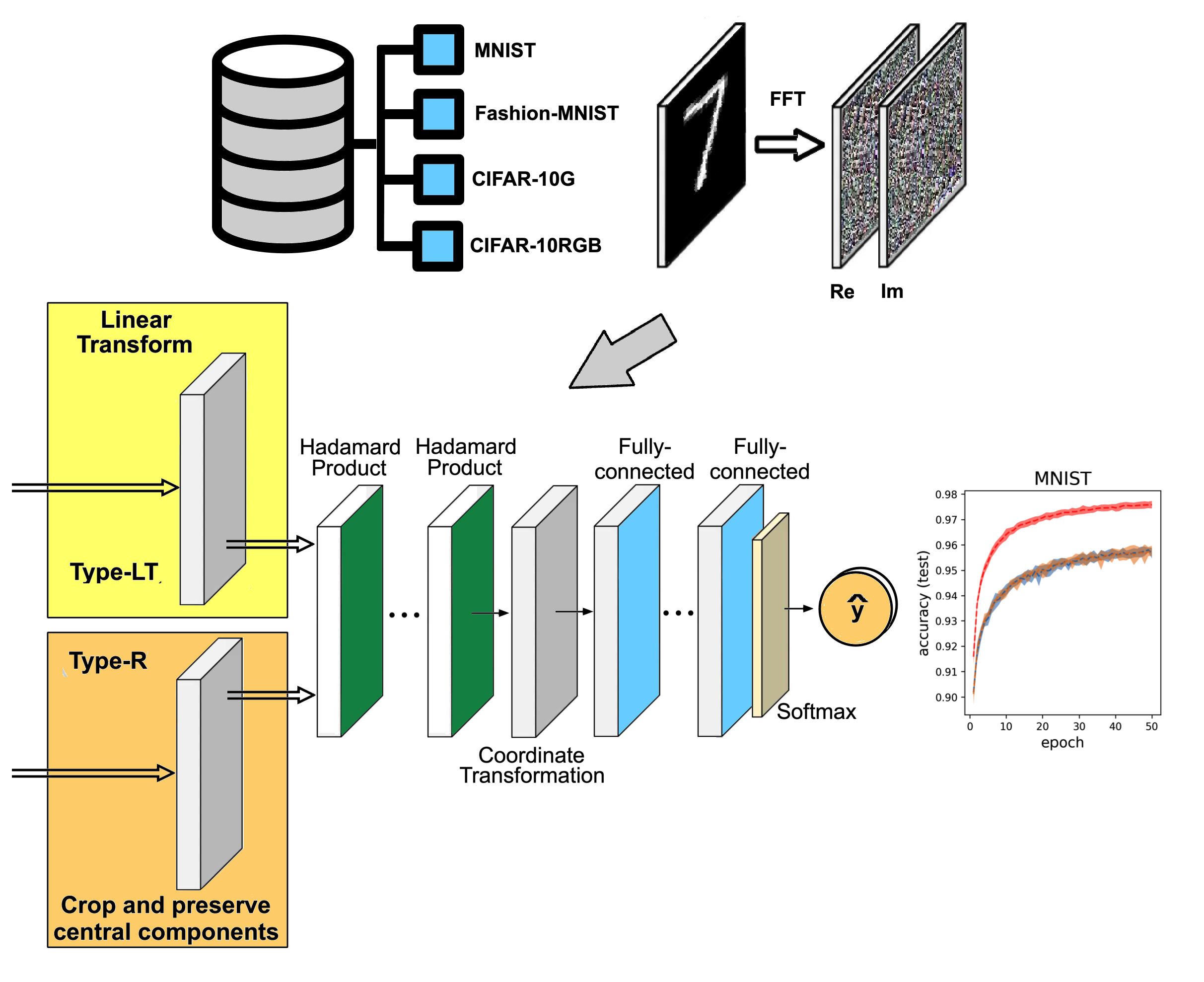

In computer vision tasks, Convolutional Neural Networks (CNNs) have been the cornerstone of numerous models with outstanding results. Nevertheless, their high computational cost limits their potential on low-end devices. To overcome this drawback, various models have been proposed that exploit the convolution theorem to reduce the computations in CNN architectures. However, working in the Fourier domain increases the number of kernel weights, because the kernels are defined with complex values. In this work, we present a strategy to reduce this number by defining a reduced representation, which considers a limited number of components so that the parameter count stays comparable to that of a standard CNN. To this end, we introduce a novel layer, called Linear Transform, that learns to identify the most essential components of a frequency representation and builds an appropriate reduced representation from them. Our experiments on image classification with the MNIST, Fashion-MNIST, CIFAR-10 and CIFAR-100 databases showed that our proposal requires fewer parameters and floating-point operations than both conventional and Fourier CNN architectures. Moreover, the Linear Transform layer improved accuracy in several cases. Cases where accuracy decreases are also analyzed.
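The two quantities the abstract trades off, computation via the convolution theorem and the extra weights of complex-valued kernels, can be checked with a small example. The sketch below, in plain Python, compares a direct circular convolution with the element-wise product of 2-D DFTs on a 6 × 6 input, then counts real-valued weights for a spatial kernel and for complex kernels defined in the frequency domain. It assumes single-channel square inputs and circular (periodic) boundaries; a linear, zero-padded convolution would need both operands padded to avoid wrap-around. The 2 × 2 "kept components" case is a hypothetical fixed choice used only to show how a reduced representation can keep the weight count comparable to a 3 × 3 spatial kernel. It is not the paper's learned Linear Transform layer, whose internals are not described here, and all function names are illustrative.

```python
import cmath
import random

# Minimal sketch, assuming square single-channel inputs and circular (periodic)
# boundaries. Naive O(n^4) DFTs keep the example stdlib-only; real code would use an FFT.


def dft2(x):
    """Naive 2-D discrete Fourier transform of an n x n list of lists."""
    n = len(x)
    return [[sum(x[a][b] * cmath.exp(-2j * cmath.pi * (u * a + v * b) / n)
                 for a in range(n) for b in range(n))
             for v in range(n)]
            for u in range(n)]


def idft2(X):
    """Naive inverse 2-D DFT, including the 1 / n^2 normalisation."""
    n = len(X)
    return [[sum(X[u][v] * cmath.exp(2j * cmath.pi * (u * a + v * b) / n)
                 for u in range(n) for v in range(n)) / (n * n)
             for b in range(n)]
            for a in range(n)]


def pad_kernel(k, n):
    """Zero-pad a small spatial kernel to n x n so it matches the input size."""
    return [[k[i][j] if i < len(k) and j < len(k[0]) else 0.0 for j in range(n)]
            for i in range(n)]


def circular_conv2d(x, k):
    """Direct circular convolution: y[a][b] = sum_ij x[i][j] * k[(a-i) % n][(b-j) % n]."""
    n = len(x)
    return [[sum(x[i][j] * k[(a - i) % n][(b - j) % n]
                 for i in range(n) for j in range(n))
             for b in range(n)]
            for a in range(n)]


def fourier_conv2d(x, k):
    """Convolution theorem: transform both, multiply element-wise, transform back."""
    n = len(x)
    X, K = dft2(x), dft2(k)
    Y = [[X[u][v] * K[u][v] for v in range(n)] for u in range(n)]
    return [[z.real for z in row] for row in idft2(Y)]


def spatial_kernel_params(k):
    """Real weights in one k x k spatial kernel."""
    return k * k


def complex_kernel_params(m):
    """Real weights in an m x m complex kernel: a real and an imaginary part per entry."""
    return 2 * m * m


random.seed(0)
n = 6
x = [[random.uniform(-1, 1) for _ in range(n)] for _ in range(n)]
k = pad_kernel([[random.uniform(-1, 1) for _ in range(3)] for _ in range(3)], n)

direct = circular_conv2d(x, k)
spectral = fourier_conv2d(x, k)
max_err = max(abs(direct[a][b] - spectral[a][b]) for a in range(n) for b in range(n))
print(f"max |spatial - Fourier| = {max_err:.2e}")

print(spatial_kernel_params(3))   # 9 real weights for a 3 x 3 spatial kernel
print(complex_kernel_params(32))  # 2048 real weights for a full 32 x 32 complex kernel
print(complex_kernel_params(2))   # 8 real weights if only 2 x 2 components are kept
```

The two convolution results agree up to floating-point rounding, which is the property Fourier CNNs rely on to replace sliding-window sums with element-wise products. The weight counts show why that saving is not free: a kernel defined over a full 32 × 32 spectrum carries far more real weights than a 3 × 3 spatial kernel unless only a few components are retained.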
