Toward a new dataset: Mexican Traffic Signs "ReWaIn-MTS" for detection using deep learning

Keywords:

Traffic Signs Detection, Mexican Traffic Signs, YOLOv5 detector, YOLOv8 detector, DETR Transformer

Abstract

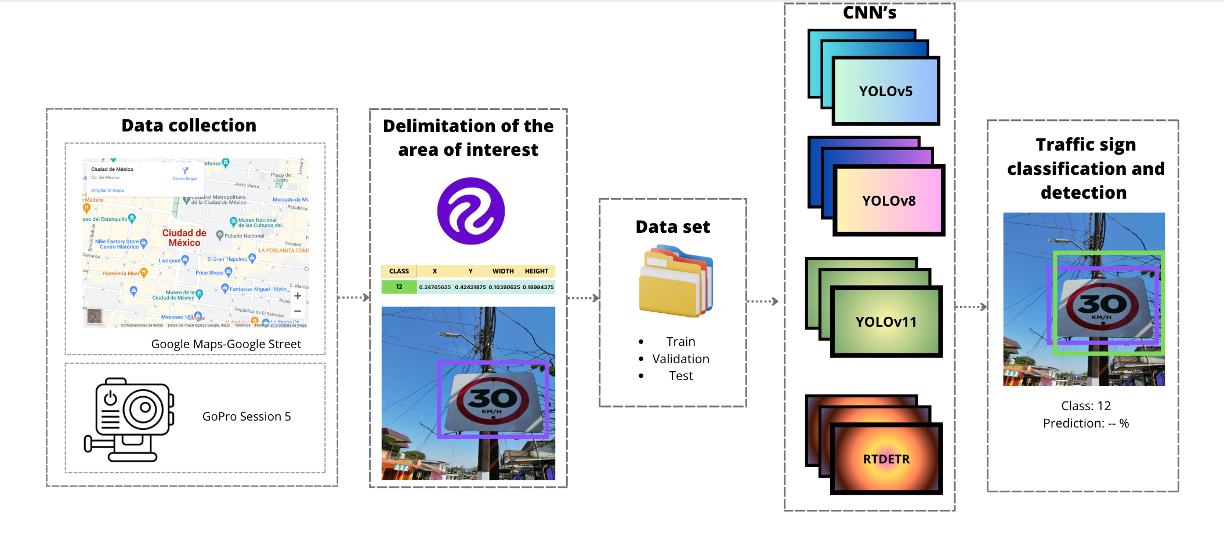

Many factors along the road can endanger drivers and pedestrians and lead to high-impact accidents. Traffic signs are therefore essential: they inform road users about the condition of the road during a trip. Traffic sign detection and classification is a research area in computer vision whose main applications are autonomous driving and driver assistance. Convolutional neural networks (CNNs) achieve better detection results than conventional methods. In this work, we employed machine learning techniques based on CNNs to classify and detect Mexican traffic signs. We built a dataset of traffic signs found in Mexican territory, collected on the main urban roads of eight different cities. The dataset contains 2,283 road elements divided into 37 classes for training and validating algorithms. We also propose a novel methodology for applying data augmentation to improve the performance of classification and detection models. We use the mean Average Precision (mAP) metric to compare state-of-the-art detection methods, specifically YOLOv5, YOLOv8, and the transformer-based DETR. Models trained with data augmentation obtained better results.
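As a rough illustration of the mAP metric mentioned above, the following minimal Python sketch computes intersection over union (IoU), a per-class average precision, and their mean. It is not the evaluation code used in this work. The function names, the `(x1, y1, x2, y2)` box format, the IoU threshold of 0.5, and the all-point interpolation of the precision-recall curve are all assumptions made for the example, and for simplicity every box of a class is treated as coming from a single image.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds, gts, iou_thr=0.5):
    """AP for one class (hypothetical helper, single-image simplification).

    preds: list of (score, box) predictions; gts: list of ground-truth boxes.
    iou_thr=0.5 is an assumed threshold, not one stated in the abstract.
    """
    if not gts:
        return 0.0
    preds = sorted(preds, key=lambda p: p[0], reverse=True)
    matched = set()  # indices of ground-truth boxes already claimed
    hits = []        # 1 for a true positive, 0 for a false positive
    for _, box in preds:
        best_j, best_iou = -1, iou_thr
        for j, gt in enumerate(gts):
            if j in matched:
                continue
            overlap = iou(box, gt)
            if overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j >= 0:
            matched.add(best_j)
            hits.append(1)
        else:
            hits.append(0)

    # Precision and recall after each prediction, in descending score order.
    precisions, recalls, cum_tp = [], [], 0
    for k, hit in enumerate(hits, start=1):
        cum_tp += hit
        precisions.append(cum_tp / k)
        recalls.append(cum_tp / len(gts))

    # Precision envelope: make precision non-increasing as recall grows.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])

    # Area under the interpolated precision-recall curve.
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def mean_average_precision(per_class):
    """Mean of per-class APs; per_class maps class name -> (preds, gts)."""
    aps = [average_precision(p, g) for p, g in per_class.values()]
    return sum(aps) / len(aps) if aps else 0.0


if __name__ == "__main__":
    # Toy data with made-up class names and boxes.
    toy = {
        "stop": ([(0.9, (0, 0, 10, 10)), (0.8, (50, 50, 60, 60))], [(0, 0, 10, 10)]),
        "yield": ([(0.7, (0, 0, 10, 10))], [(100, 100, 110, 110)]),
    }
    print(mean_average_precision(toy))
```

In a real evaluation, predictions are matched to ground truth image by image, and toolkits often average AP over several IoU thresholds, so the numbers from this sketch are not directly comparable to reported mAP values.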

