Augmentative and Alternative Communication Using Eye Tracking and Word Recommendation Using Language Models

Keywords:

Augmentative and Alternative Communication, Eye tracking, Virtual keyboard, Artificial neural network, Language Models, Accessibility

Abstract

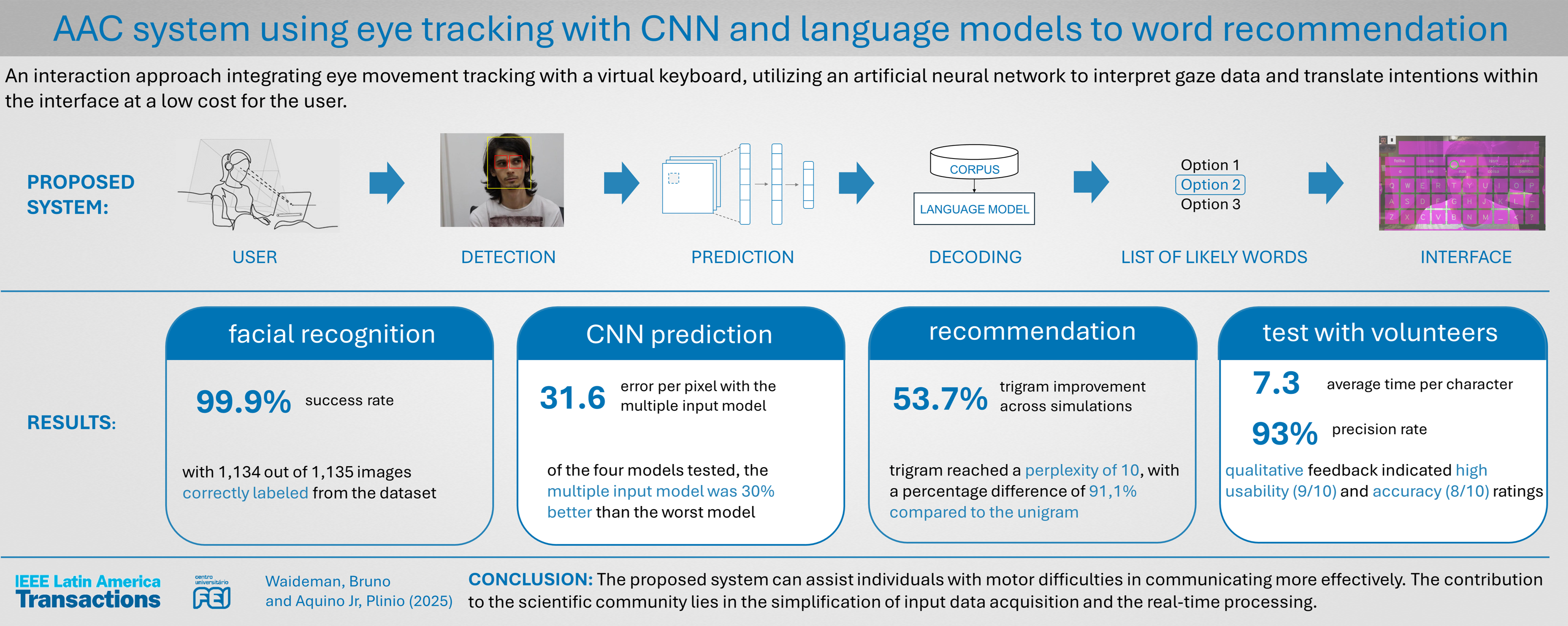

The production, storage, and dissemination of information have evolved from ancient forms of communication to modern digital technologies, with digital media playing a key role in connecting individuals. Although keyboards are a common interaction tool, they pose challenges for individuals with motor impairments. Augmentative and Alternative Communication (AAC) techniques, including gesture input, voice commands, and sensor-based systems, have emerged to address these limitations. Eye tracking, already used in accessibility systems, offers opportunities but also brings challenges such as visual fatigue and tracking inaccuracies that slow typing. To address these challenges, this study proposes an interaction approach that integrates eye-movement tracking with a virtual keyboard, using an artificial neural network to interpret gaze data and translate the user's intentions into actions within the interface, at low cost to the user. In addition, a Language Model (LM) suggests likely next words. This research will assess the impact of these technologies on typing speed, error rate, and linguistic predictability, contributing to both science and society by advancing accessible communication systems.
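The abstract names two measurable ingredients: next-word suggestions from a language model, and evaluation by typing speed and error rate. The sketch below illustrates both under stated assumptions. The abstract does not specify which LM is used, so a simple bigram count model stands in for it here; this is a swapped-in illustration, not the study's model. For the metrics, it assumes the conventions common in text-entry research: words per minute with one "word" counted as five characters, and an error rate based on the minimum string distance (Levenshtein distance) between the presented and transcribed phrases. All names (`BigramSuggester`, `words_per_minute`, `msd`, `msd_error_rate`) are hypothetical.

```python
from collections import Counter, defaultdict


class BigramSuggester:
    """Hypothetical stand-in for the study's LM: ranks next words by bigram counts."""

    def __init__(self, corpus: str) -> None:
        # Map each word to a Counter of the words that follow it in the corpus.
        self.following: dict[str, Counter] = defaultdict(Counter)
        words = corpus.lower().split()
        for prev, nxt in zip(words, words[1:]):
            self.following[prev][nxt] += 1

    def suggest(self, typed: str, k: int = 3) -> list[str]:
        """Return up to k candidate next words, given the text typed so far."""
        words = typed.lower().split()
        if not words:
            return []
        counts = self.following.get(words[-1])
        return [w for w, _ in counts.most_common(k)] if counts else []


def words_per_minute(transcribed: str, seconds: float) -> float:
    """Assumed text-entry rate: (|T| - 1) characters over the elapsed time, 5 chars per word."""
    return (len(transcribed) - 1) * 60 / (5 * seconds)


def msd(a: str, b: str) -> int:
    """Minimum string distance (Levenshtein): insertions, deletions, substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                  # deletion
                           cur[j - 1] + 1,               # insertion
                           prev[j - 1] + (ca != cb)))    # substitution or match
        prev = cur
    return prev[-1]


def msd_error_rate(presented: str, transcribed: str) -> float:
    """Assumed error rate in percent: MSD / max(|P|, |T|) * 100."""
    longest = max(len(presented), len(transcribed))
    return 0.0 if longest == 0 else msd(presented, transcribed) / longest * 100


if __name__ == "__main__":
    lm = BigramSuggester("i want water . i want water . i want to rest")
    print(lm.suggest("I want"))                  # most frequent followers of "want"
    print(words_per_minute("hello world", 6.0))  # 10 chars in 6 s
    print(msd_error_rate("kitten", "sitting"))   # 3 edits over 7 chars
```

A bigram model keeps the example dependency-free; a neural LM would replace `suggest` with its own ranking while the evaluation functions stay unchanged, which is what allows suggestion quality to be compared through typing speed and error rate.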

