An Enhanced Fire Perception Framework for Firefighting Robots: ECA-BiFPN Boosted YOLO-EB with Multi-Modal Fusion

Keywords:

Firefighting robots, Flame segmentation, YOLO-EB, Binocular vision, Spectral fusion, Multi-modal perception

Abstract

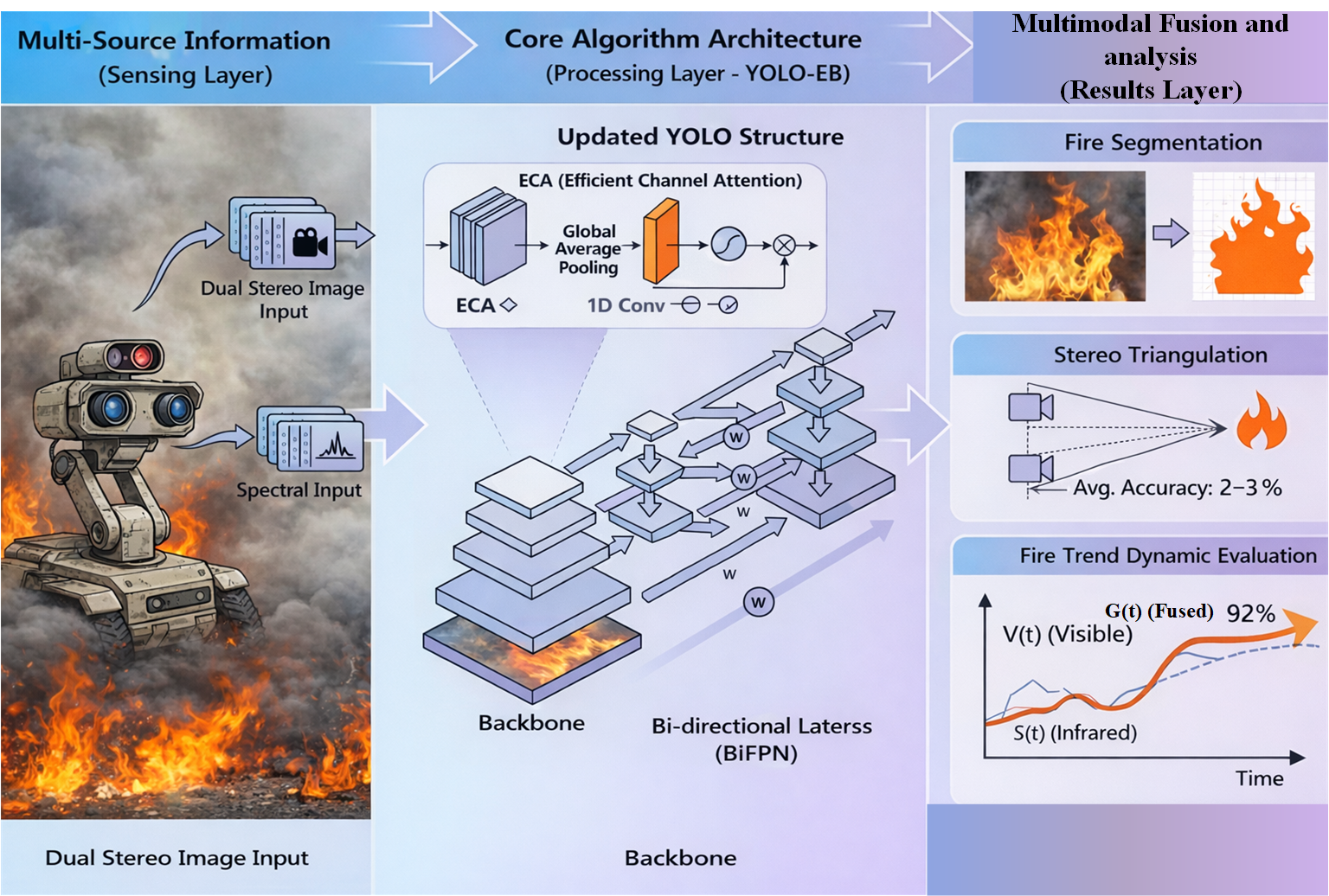

Complex fire environments make flame perception and situational assessment difficult for firefighting robots. To address this, this paper proposes a multimodal perception method that integrates flame segmentation, spatial localization, and situational awareness. Built on an enhanced YOLO-EB segmentation network, the approach combines stereo vision and spectral information to detect and localize flames precisely. The YOLO-EB network incorporates an Efficient Channel Attention (ECA) mechanism and a Bidirectional Feature Pyramid Network (BiFPN) to strengthen its feature representation while balancing accuracy and real-time performance. Ablation experiments show that the two modules act synergistically and significantly improve on the YOLOv8-seg baseline: the proposed model achieves a flame recall of 0.680 and a mean average precision (mAP) of 0.821. Using the resulting high-quality segmentation masks, a stereo-spectral fusion perception framework is constructed that localizes flames at medium distances with an average error of 2–3%. Through dynamic fusion of spectral and visible-light features, the system attains an average situational awareness accuracy of 92.45%, significantly outperforming single-modal methods. Experimental results confirm that this integrated approach gives firefighting robots stable and reliable capabilities for early fire detection, accurate localization, and dynamic assessment, demonstrating strong potential for practical deployment.
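The abstract does not spell out how the segmentation masks feed the stereo localization step, so the following is only a minimal sketch of one common approach: standard rectified-stereo triangulation, where depth is `focal_px * baseline_m / disparity_px` and the disparity is taken between the flame-mask centroids in the left and right images. All function names, the tiny toy masks, and the camera parameters (100 px focal length, 0.12 m baseline) are hypothetical and are not taken from the paper.

```python
def mask_centroid_x(mask):
    """Mean column index of the nonzero pixels in a binary mask (list of rows)."""
    cols = [x for row in mask for x, v in enumerate(row) if v]
    if not cols:
        raise ValueError("mask contains no flame pixels")
    return sum(cols) / len(cols)


def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Rectified-stereo triangulation: Z = f * B / d (f and d in pixels, B in metres)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the rig")
    return focal_px * baseline_m / disparity_px


def relative_error(estimated, reference):
    """Relative localization error, the kind of quantity a percentage error reports."""
    return abs(estimated - reference) / reference


# Toy flame masks from a hypothetical rectified left/right camera pair.
left_mask = [
    [0, 0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 1, 1, 0],
    [0, 0, 0, 0, 0, 1, 1, 0],
]
right_mask = [
    [0, 0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0, 0, 0, 0],
]

# Horizontal shift of the flame region between the two views, in pixels.
disparity = mask_centroid_x(left_mask) - mask_centroid_x(right_mask)

# Assumed camera parameters, chosen only for illustration.
depth_m = depth_from_disparity(focal_px=100.0, baseline_m=0.12, disparity_px=disparity)
print(f"disparity = {disparity:.1f} px, estimated depth = {depth_m:.2f} m")
```

Because depth is inversely proportional to disparity, a fixed pixel error in the mask centroid translates into a larger depth error as the flame moves farther away, which is one reason mask quality matters for localization accuracy.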
