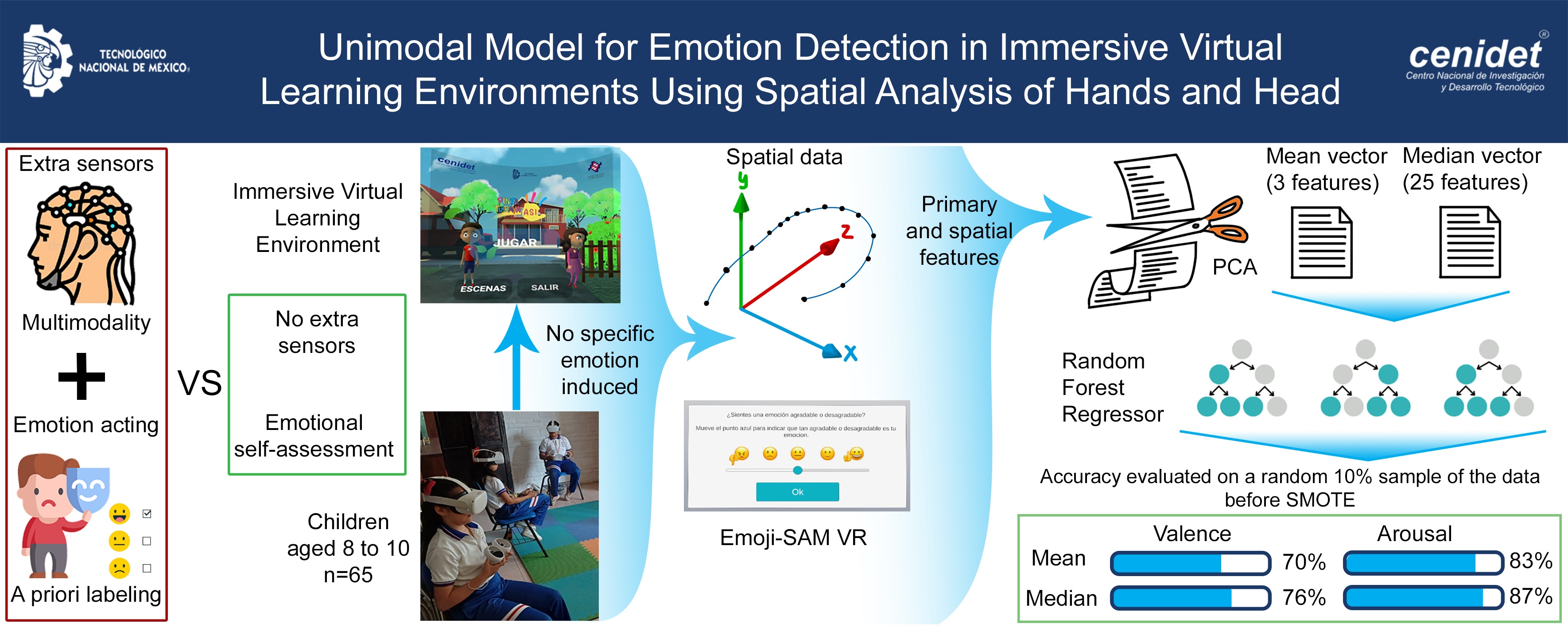

Unimodal Model for Emotion Detection in Immersive Virtual Learning Environments Using Spatial Analysis of Hands and Head

Keywords:

nonverbal behavior, behavioral measurement, emotion recognition, feature selection, body motion analysis, random forest, Virtual Reality, affective computing

Abstract

This study introduces a unimodal model for emotion detection in Virtual Reality (VR) environments that relies only on spatial information from the user's head and hands during interactions within an Immersive Virtual Learning Environment (IVLE). The goal is to eliminate the need for additional sensors, offering an emotion recognition method that can scale to multiuser settings. Rotation and position data from VR devices were collected along with self-reported valence and arousal ratings from 65 participants. Primary and spatial features were extracted and summarized as mean and median vectors. Random forest regression was then used to predict valence and arousal values on SMOTE-augmented data. A paired, randomly selected set of pre-augmentation data was used to further test the models in a scenario closer to the final implementation. The models achieved accuracies of 70% and 76% for valence prediction using the mean and median vectors, respectively. For arousal, the accuracies were 83% (mean vector) and 87% (median vector). The findings suggest that the median-based approach improves performance, although it involves higher feature dimensionality. The model enables non-invasive inference of a user's emotional state in VR environments, without cables or extra sensors, which improves the user experience and lowers implementation costs. These results provide a foundation for integrating affective tutors in IVLEs, with potential applications in education and training involving large groups.
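As a rough illustration of the aggregation step described above, the following sketch reduces a short sequence of head and hand tracking frames to one mean vector and one median vector. The frame layout, the feature names, and the hand-to-head distance used as a "spatial feature" are assumptions made for this example and are not taken from the study; rotation channels are omitted but would be reduced the same way. In this sketch both vectors have the same length, so it does not reproduce the study's selected feature sets.

```python
from math import dist
from statistics import mean, median

# Hypothetical frame layout: each tracked device reports a 3D position (metres).
DEVICES = ("head", "left_hand", "right_hand")


def frame_features(frame):
    """Flatten one tracking frame into a dict of named scalar features."""
    feats = {}
    for dev in DEVICES:
        for axis, value in zip("xyz", frame[dev]):
            feats[f"{dev}_{axis}"] = value
    # Assumed spatial features: Euclidean distance from each hand to the head.
    feats["left_hand_to_head"] = dist(frame["left_hand"], frame["head"])
    feats["right_hand_to_head"] = dist(frame["right_hand"], frame["head"])
    return feats


def summarize(frames, reducer):
    """Reduce every feature over all frames with `reducer` (mean or median)."""
    rows = [frame_features(f) for f in frames]
    return {name: reducer([row[name] for row in rows]) for name in rows[0]}


# Toy session: the right hand is raised sharply in the last frame.
frames = [
    {"head": (0.0, 1.6, 0.0), "left_hand": (-0.3, 1.2, 0.0), "right_hand": (0.3, 1.2, 0.0)},
    {"head": (0.0, 1.6, 0.0), "left_hand": (-0.3, 1.2, 0.0), "right_hand": (0.3, 1.6, 0.0)},
    {"head": (0.0, 1.6, 0.0), "left_hand": (-0.3, 1.2, 0.0), "right_hand": (0.3, 2.8, 0.0)},
]

mean_vector = summarize(frames, mean)
median_vector = summarize(frames, median)
```

In this toy session the single large hand movement pulls the mean of `right_hand_y` upward while the median stays at the middle sample, which is one plausible reason the two summaries could behave differently as regression inputs. Either vector could then be passed, one row per session, to a random forest regressor with the self-reported valence or arousal rating as the target.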
