Energy-efficient Navigation in Unknown Static Flow Fields using Reinforcement Learning

Keywords:

Autonomous Agents, Reinforcement Learning, Intelligent Transportation

Abstract

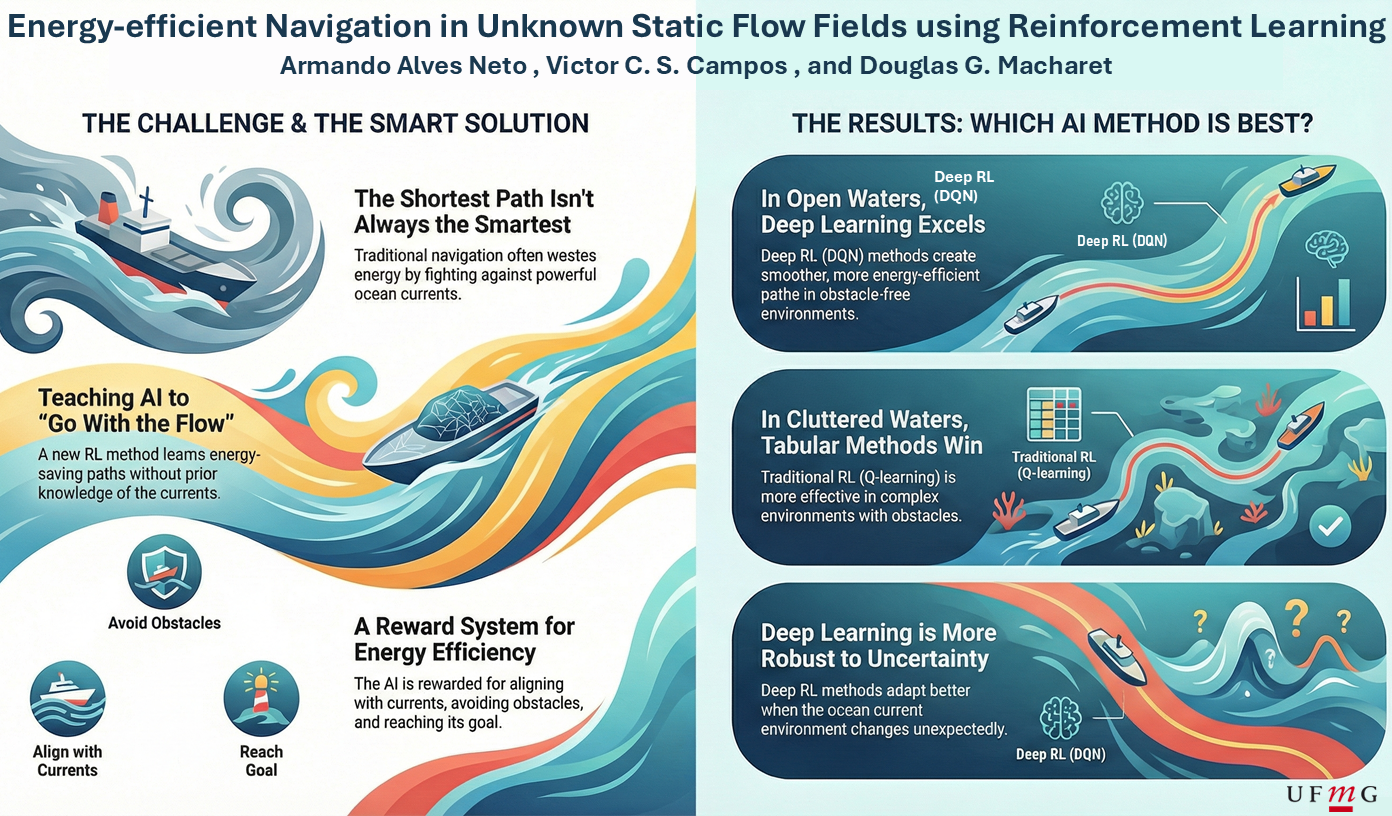

Energy efficiency is becoming increasingly critical in the maritime industry, driven by demands for cost reduction, environmental sustainability, regulatory compliance, trade volume growth, and safety enhancement. In this context, Reinforcement Learning (RL) methods have gained significant attention for solving control and optimization problems, and recent advances have demonstrated their effectiveness in diverse domains, including logistics, scheduling, and resource allocation. In this paper, we address the problem of energy-efficient navigation of vessels in unknown static flow fields using RL. We propose a reward function that minimizes control effort under the influence of the flow, and we evaluate two tabular and two function-approximation methods across different scenarios, comparing them against state-of-the-art approaches from the literature. Finally, we provide a zero-shot sim-to-sim analysis to evaluate the impact of environmental uncertainty on our proposed method.
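The abstract does not state the reward function or the environment in detail, so the following is only a minimal sketch of the general idea, not the method evaluated in the paper. It assumes a hypothetical 5x5 grid with an eastward current along the bottom row, a reward equal to the negative squared thrust plus a small time penalty and a goal bonus, and plain tabular Q-learning. All names and constants (`flow`, `effort`, `step`, `train`, the penalty of 0.1, the bonus of 10) are illustrative assumptions. The agent never queries the flow directly; it only observes the transitions the flow produces.

```python
import random

N = 5                          # grid is N x N cells (illustrative size)
START, GOAL = (0, 1), (4, 1)   # assumed start and goal cells
# Thrust actions: idle (pure drift) or one cell of thrust in a cardinal direction.
ACTIONS = [(0, 0), (1, 0), (-1, 0), (0, 1), (0, -1)]


def flow(pos):
    """Assumed static flow field: an eastward current along the bottom row only."""
    return (1, 0) if pos[1] == 0 else (0, 0)


def effort(thrust):
    """Control effort as squared thrust magnitude (0 when drifting)."""
    return float(thrust[0] ** 2 + thrust[1] ** 2)


def step(pos, thrust):
    """Move by flow plus thrust, clipped to the grid; return (next, reward, done)."""
    fx, fy = flow(pos)
    x = min(max(pos[0] + fx + thrust[0], 0), N - 1)
    y = min(max(pos[1] + fy + thrust[1], 0), N - 1)
    nxt = (x, y)
    done = nxt == GOAL
    # Penalize control effort and elapsed time; reward reaching the goal.
    reward = -effort(thrust) - 0.1 + (10.0 if done else 0.0)
    return nxt, reward, done


def train(episodes=5000, alpha=0.5, gamma=1.0, epsilon=0.3, max_steps=50, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration from a fixed start."""
    rng = random.Random(seed)
    q = {(x, y): [0.0] * len(ACTIONS) for x in range(N) for y in range(N)}
    for _ in range(episodes):
        pos = START
        for _ in range(max_steps):
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q[pos][i])
            nxt, reward, done = step(pos, ACTIONS[a])
            # No bootstrapping from the terminal state.
            target = reward + (0.0 if done else gamma * max(q[nxt]))
            q[pos][a] += alpha * (target - q[pos][a])
            pos = nxt
            if done:
                break
    return q


def greedy_rollout(q, max_steps=50):
    """Follow the greedy policy; return the visited cells and total control effort."""
    pos, path, total_effort = START, [START], 0.0
    for _ in range(max_steps):
        a = max(range(len(ACTIONS)), key=lambda i: q[pos][i])
        total_effort += effort(ACTIONS[a])
        pos, _, done = step(pos, ACTIONS[a])
        path.append(pos)
        if done:
            break
    return path, total_effort


if __name__ == "__main__":
    path, total_effort = greedy_rollout(train())
    print(path, total_effort)
```

In this toy setting, thrusting straight from start to goal along the current-free row costs four units of effort, whereas dropping into the current, drifting, and climbing back out costs two, so an effort-penalizing reward favors the route that exploits the flow. The function-approximation methods and the sim-to-sim analysis mentioned in the abstract are outside the scope of this sketch.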
