Boosting fine-grained feature fusion in 3D point cloud registration

Keywords:

3D point cloud, point cloud registration, granular feature

Abstract

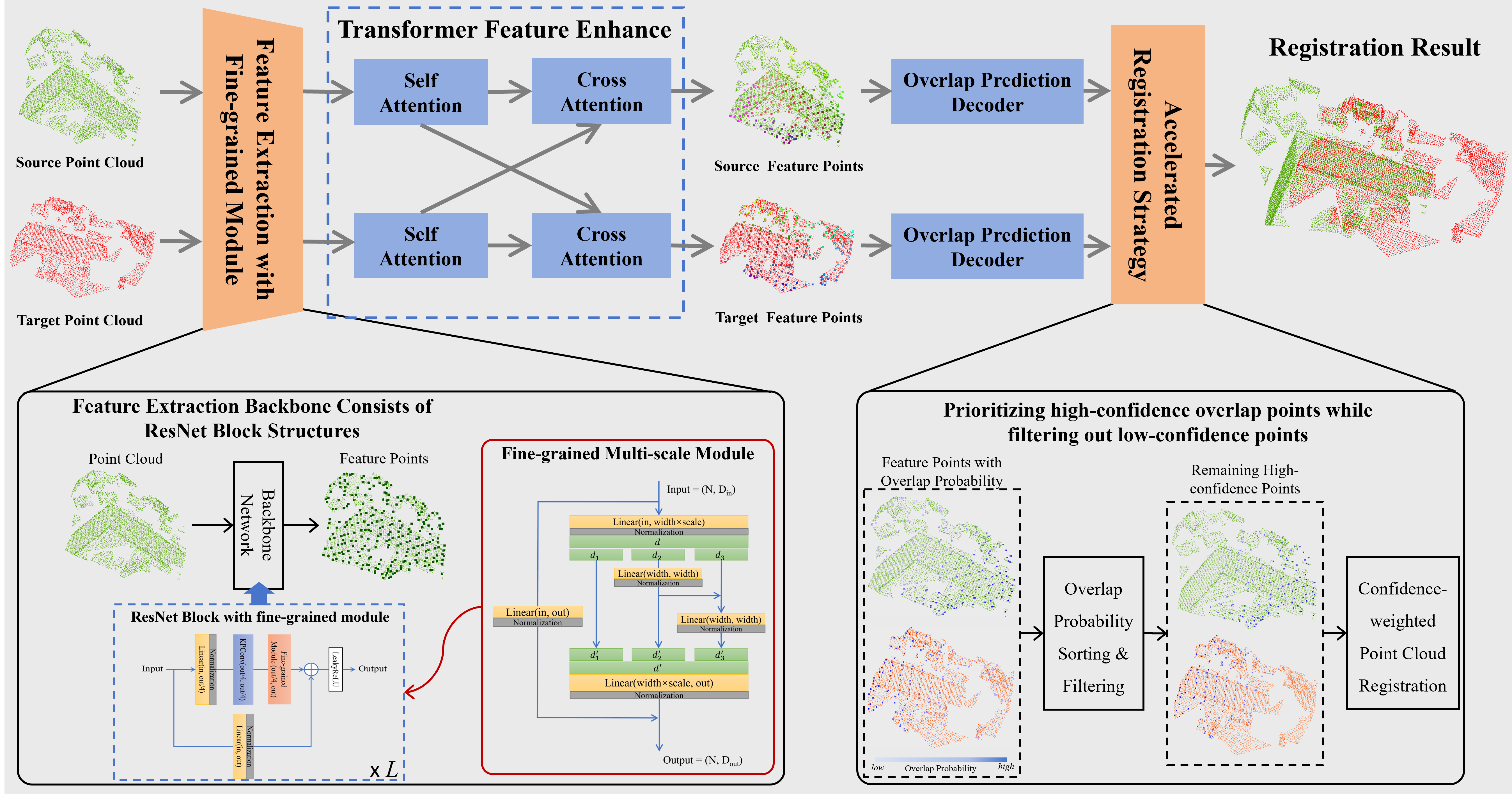

Existing point cloud registration methods have made significant progress by adopting transformer architectures. However, these methods often overlook the fine-grained structural information in local features, which limits their adaptability to complex scenes. To address this issue, we propose a fine-grained module that expands the receptive field through hierarchical feature fusion, providing finer-grained feature information and improving registration accuracy. First, we design a multi-scale hierarchical feature fusion module that captures fine-grained features and expands the receptive field. Second, we integrate this module into the REGTR backbone network to strengthen feature correlation. Finally, we propose an efficient and accurate registration strategy that increases the contribution of features with a high probability of lying in the overlap region. Comprehensive experiments on indoor (3DMatch), synthetic object (ModelNet40), and outdoor (MCD) benchmarks demonstrate the method's effectiveness. Compared with the REGTR baseline, our method achieves relative error reductions of 17.6% on 3DMatch and 8.9% on ModelNet40 while maintaining competitive computational efficiency. Consistent improvements on the outdoor MCD dataset further indicate that the method generalizes across diverse scenarios.
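The abstract does not specify the module's operators or the exact weighting rule, so the following is only a minimal NumPy sketch under stated assumptions, not the authors' implementation. `hierarchical_fusion` follows the Res2Net-style split-and-fuse pattern (Res2Net appears in the references): per-point features are split into channel groups, and each group after the first is added to the previous group's output before a neighborhood aggregation, so later groups cover progressively larger neighborhoods. The k-nearest-neighbor mean pooling, the projection matrices, and the names `knn_indices`, `hierarchical_fusion`, and `weighted_kabsch` are hypothetical. `weighted_kabsch` illustrates one common way to let high-overlap-probability correspondences dominate the pose estimate: a weighted Kabsch (Procrustes) solve with weights `overlap_prob ** gamma`, where the exponent `gamma` is an assumed sharpening parameter.

```python
import numpy as np


def knn_indices(points: np.ndarray, k: int) -> np.ndarray:
    """Return the indices of the k nearest neighbors of each point (itself included)."""
    d2 = np.sum((points[:, None, :] - points[None, :, :]) ** 2, axis=-1)
    return np.argsort(d2, axis=1)[:, :k]


def hierarchical_fusion(points, feats, weights, k=8):
    """Res2Net-style hierarchical fusion of per-point features (hypothetical sketch).

    points:  (N, 3) coordinates used to build the kNN neighborhoods.
    feats:   (N, C) features; C must be divisible by s = len(weights) + 1.
    weights: list of (C/s, C/s) projection matrices, one per group after the first.
    """
    s = len(weights) + 1
    groups = np.split(feats, s, axis=1)
    nbrs = knn_indices(points, k)
    outputs = [groups[0]]  # the first group passes through unchanged
    prev = None
    for i, w in enumerate(weights, start=1):
        # Adding the previous group's (already aggregated) output lets this
        # group's aggregation reach one hop further, so the receptive field
        # grows with the group index.
        x = groups[i] if prev is None else groups[i] + prev
        # Project, average over the k nearest neighbors, then apply ReLU.
        y = np.maximum((x @ w)[nbrs].mean(axis=1), 0.0)
        outputs.append(y)
        prev = y
    return np.concatenate(outputs, axis=1)


def weighted_kabsch(src, tgt, overlap_prob, gamma=2.0):
    """Rigid (R, t) minimizing sum_i w_i * ||R @ src_i + t - tgt_i||^2.

    w_i = overlap_prob_i ** gamma; gamma > 1 (an assumed choice) further
    emphasizes correspondences with high overlap probability.
    """
    w = np.asarray(overlap_prob, dtype=float) ** gamma
    w = w / w.sum()
    mu_s, mu_t = w @ src, w @ tgt  # weighted centroids
    H = (src - mu_s).T @ (w[:, None] * (tgt - mu_t))  # 3x3 weighted covariance
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))  # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = mu_t - R @ mu_s
    return R, t
```

Raising the probabilities to a power is only one way to emphasize likely-overlapping correspondences; thresholding or keeping only the top-k scores would serve a similar purpose. Correspondences given zero probability receive zero weight and therefore cannot bias the recovered pose.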


References

A. de Carvalho Santana and A. Silva Macedo, “Use of lidar sensors for non-contact, real-time measurement of ore mass on belt conveyors,” IEEE Latin America Transactions, vol. 22, no. 1, pp. 63–70, 2024, doi: 10.1109/TLA.2024.10375737.

J. Gaia, E. Orosco, F. Rossomando, and C. Soria, “Mapping the landscape of SLAM research: A review,” IEEE Latin America Transactions, vol. 21, no. 12, pp. 1313–1336, 2023, doi: 10.1109/TLA.2023.10305240.

M. Yuan, X. Huang, K. Fu, Z. Li, and M. Wang, “Boosting 3D point cloud registration by transferring multi-modality knowledge,” 2023 IEEE International Conference on Robotics and Automation (ICRA), pp. 11734–11741, 2023, doi: 10.1109/ICRA48891.2023.10161411.

X. Huang, G. Mei, J. Zhang, and R. Abbas, “A comprehensive survey on point cloud registration,” arXiv preprint arXiv:2103.02690, 2021.

Y.-X. Zhang, J. Gui, X. Cong, X. Gong, and W. Tao, “A comprehensive survey and taxonomy on point cloud registration based on deep learning,” arXiv preprint arXiv:2404.13830, 2024.

M. Lyu, J. Yang, Z. Qi, R. Xu, and J. Liu, “Rigid pairwise 3D point cloud registration: A survey,” Pattern Recognit., vol. 151, p. 110408, 2024, doi: 10.1016/j.patcog.2024.110408.

P. Besl and N. D. McKay, “A method for registration of 3-D shapes,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 14, no. 2, pp. 239–256, 1992, doi: 10.1109/34.121791.

A. W. Fitzgibbon, “Robust registration of 2D and 3D point sets,” Image Vis. Comput., vol. 21, pp. 1145–1153, 2003, doi: 10.1016/j.imavis.2003.09.004.

S. Granger and X. Pennec, “Multi-scale EM-ICP: A fast and robust approach for surface registration,” Computer Vision - ECCV 2002, 7th European Conference on Computer Vision, Copenhagen, Denmark, May 28-31, 2002, Proceedings, Part IV, 2002, doi: 10.1007/3-540-47979-

R. B. Rusu, N. Blodow, and M. Beetz, “Fast point feature histograms (FPFH) for 3D registration,” 2009 IEEE International Conference on Robotics and Automation, pp. 3212–3217, 2009, doi: 10.1109/ROBOT.2009.5152473.

S. Salti, F. Tombari, and L. di Stefano, “SHOT: Unique signatures of histograms for surface and texture description,” Comput. Vis. Image Underst., vol. 125, pp. 251–264, 2014, doi: 10.1016/j.cviu.2014.04.011.

C. B. Choy, J. Park, and V. Koltun, “Fully convolutional geometric features,” 2019 IEEE/CVF International Conference on Computer Vision (ICCV), pp. 8957–8965, 2019, doi: 10.1109/ICCV.2019.00905.

S. Horache, J.-E. Deschaud, and F. Goulette, “3D point cloud registration with multi-scale architecture and unsupervised transfer learning,” 2021 International Conference on 3D Vision (3DV), pp. 1351–1361, 2021, doi: 10.1109/3DV53792.2021.00142.

A. S. Hatem, Y. Qian, and Y. Wang, “Point-TTA: Test-time adaptation for point cloud registration using multitask meta-auxiliary learning,” 2023 IEEE/CVF International Conference on Computer Vision (ICCV), pp. 16448–16458, 2023, doi: 10.1109/ICCV51070.2023.01512.

P. Vial, M. Malagon, R. Segura, N. Palomeras, and M. Carreras, “GMM registration: a probabilistic scan matching approach for sonar-based AUV navigation,” 2023 IEEE International Conference on Robotics and Automation (ICRA), pp. 1033–1039, 2023, doi: 10.1109/ICRA48891.2023.10160697.

J. Zhang, F. Xie, L. Sun, P. Zhang, Z. Zhang, J. Chen, F. Chen, and M. Yi, “Multi-view point cloud registration based on improved NDT algorithm and ODM optimization method,” IEEE Robotics and Automation Letters, vol. 9, pp. 6816–6823, 2024, doi: 10.1109/LRA.2024.3408086.

C. B. Choy, W. Dong, and V. Koltun, “Deep global registration,” 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2511–2520, 2020, doi: 10.1109/CVPR42600.2020.00259.

M. A. Fischler and R. C. Bolles, “Random sample consensus: a paradigm for model fitting with applications to image analysis and automated cartography,” Commun. ACM, vol. 24, no. 6, pp. 381–395, 1981, doi: 10.1145/358669.358692.

Z. J. Yew and G. H. Lee, “REGTR: End-to-end point cloud correspondences with transformers,” 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 6667–6676, 2022, doi: 10.1109/CVPR52688.2022.00656.

X. Zhang, J. Yang, S. Zhang, and Y. Zhang, “3D registration with maximal cliques,” 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 17745–17754, 2023, doi: 10.1109/CVPR52729.2023.01702.

X. Huang, S. Li, Y. Zuo, Y. Fang, J. Zhang, and X. Zhao, “Unsupervised point cloud registration by learning unified Gaussian mixture models,” IEEE Robotics and Automation Letters, vol. 7, pp. 7028–7035, 2022, doi: 10.1109/LRA.2022.3180443.

G. Mei, H. Tang, X. Huang, W. Wang, J. Liu, J. Zhang, L. V. Gool, and Q. Wu, “Unsupervised deep probabilistic approach for partial point cloud registration,” 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 13611–13620, 2023, doi: 10.1109/CVPR52729.2023.01308.

H. Thomas, C. Qi, J.-E. Deschaud, B. Marcotegui, F. Goulette, and L. J. Guibas, “KPConv: Flexible and deformable convolution for point clouds,” 2019 IEEE/CVF International Conference on Computer Vision (ICCV), pp. 6410–6419, 2019, doi: 10.1109/ICCV.2019.00651.

C. Qi, H. Su, K. Mo, and L. J. Guibas, “PointNet: Deep learning on point sets for 3D classification and segmentation,” 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 77–85, 2017, doi: 10.1109/CVPR.2017.16.

F. Poiesi and D. Boscaini, “Distinctive 3D local deep descriptors,” 2020 25th International Conference on Pattern Recognition (ICPR), pp. 5720–5727, 2020, doi: 10.1109/ICPR48806.2021.9411978.

H. Chen, B. Chen, Z. Zhao, and B. Song, “Point cloud registration based on learning Gaussian mixture models with global-weighted local representations,” IEEE Geoscience and Remote Sensing Letters, vol. 20, pp. 1–5, 2023, doi: 10.1109/LGRS.2023.3256005.

Z. Yang, J. Z. Pan, L. Luo, X. Zhou, K. Grauman, and Q.-X. Huang, “Extreme relative pose estimation for RGB-D scans via scene completion,” 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 4526–4535, 2019, doi: 10.1109/CVPR.2019.00466.

X. Huang, G. Mei, and J. Zhang, “Feature-metric registration: A fast semi-supervised approach for robust point cloud registration without correspondences,” 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 11363–11371, 2020, doi: 10.1109/CVPR42600.2020.01138.

G. Chen, M. Wang, L. Yuan, Y. Yang, and Y. Yue, “Rethinking point cloud registration as masking and reconstruction,” 2023 IEEE/CVF International Conference on Computer Vision (ICCV), pp. 17671–17681, 2023, doi: 10.1109/ICCV51070.2023.01624.

X. Bai, Z. Luo, L. Zhou, H. Fu, L. Quan, and C.-L. Tai, “D3Feat: Joint learning of dense detection and description of 3D local features,” 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 6358–6366, 2020, doi: 10.1109/CVPR42600.2020.00639.

Z. Qiao, Z. Yu, H. Yin, and S. Shen, “Pyramid semantic graph-based global point cloud registration with low overlap,” 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 11202–11209, 2023, doi: 10.1109/IROS55552.2023.10341394.

S. Gao, M.-M. Cheng, K. Zhao, X. Zhang, M.-H. Yang, and P. H. S. Torr, “Res2Net: A new multi-scale backbone architecture,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 43, pp. 652–662, 2021, doi: 10.1109/TPAMI.2019.2938758.

A. Zeng, S. Song, M. Nießner, M. Fisher, J. Xiao, and T. A. Funkhouser, “3DMatch: Learning local geometric descriptors from RGB-D reconstructions,” 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 199–208, 2017, doi: 10.1109/CVPR.2017.29.

Z. Wu, S. Song, A. Khosla, F. Yu, L. Zhang, X. Tang, and J. Xiao, “3D ShapeNets: A deep representation for volumetric shapes,” 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 1912–1920, 2015, doi: 10.1109/CVPR.2015.7298801.

T.-M. Nguyen, S. Yuan, T. H. Nguyen, P. Yin, H. Cao, L. Xie, M. K. Wozniak, P. Jensfelt, M. Thiel, J. Ziegenbein, and N. Blunder, “MCD: Diverse large-scale multi-campus dataset for robot perception,” 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2024, doi: 10.1109/CVPR52733.2024.02105.

S. Huang, Z. Gojcic, M. M. Usvyatsov, A. Wieser, and K. Schindler, “PREDATOR: Registration of 3D point clouds with low overlap,” 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pp. 4265–4274, 2021, doi: 10.1109/CVPR46437.2021.00425.

M. Grupp, “evo: Python package for the evaluation of odometry and SLAM,” 2017. [Online]. Available: https://github.com/MichaelGrupp/evo

H. Wang, Y. Liu, Q. Hu, B. Wang, J. Chen, Z. Dong, Y. Guo, W. Wang, and B. Yang, “RoReg: Pairwise point cloud registration with oriented descriptors and local rotations,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 45, pp. 10376–10393, 2023, doi: 10.1109/TPAMI.2023.3244951.