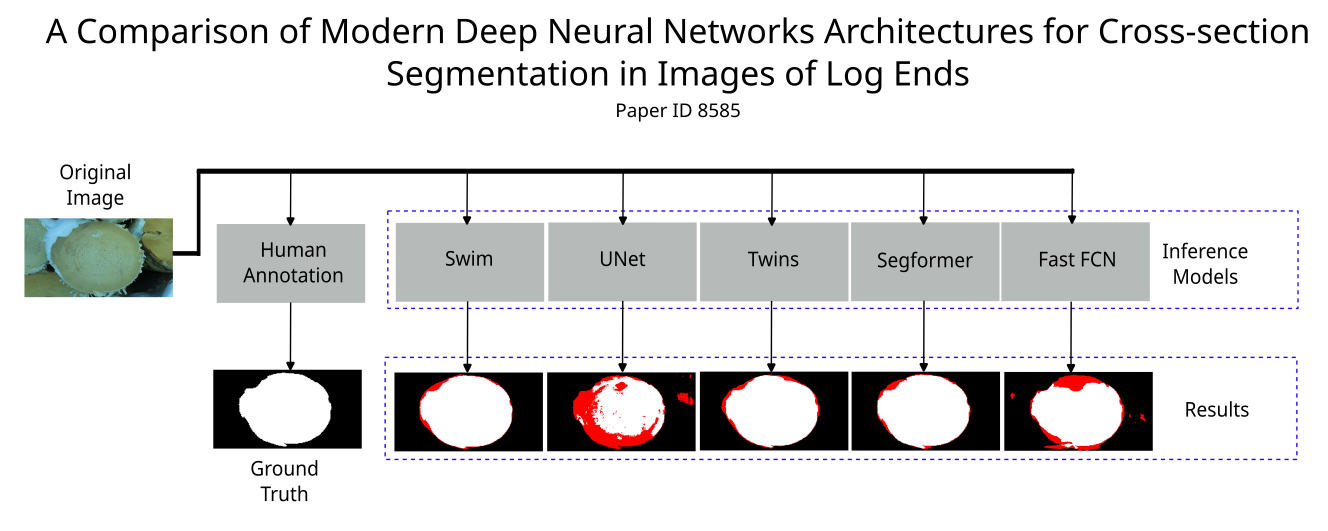

A Comparison of Modern Deep Neural Networks Architectures for Cross-section Segmentation in Images of Log Ends

Keywords:

CNNs, Deep neural networks, Segmentation Transformers, Wood Log Ends

Abstract

The semantic segmentation of log faces is the first step toward subsequent quality analyses of timber, such as quantifying mechanical strength, durability, and the aesthetic attributes of growth rings. The literature contains both classical and machine learning approaches to this task. However, more recent architectures and techniques, such as Vision Transformers (ViTs) and the latest CNNs, have not yet been thoroughly evaluated. This study compares modern deep neural network architectures for cross-section segmentation in images of log ends. The results indicate that the ViT-based networks considered in this work outperformed the previously evaluated networks in both accuracy and processing time.
