Combining deep learning model compression techniques

Keywords:

Deep learning, model compression, dark knowledge distillation, pruning, quantization

Abstract

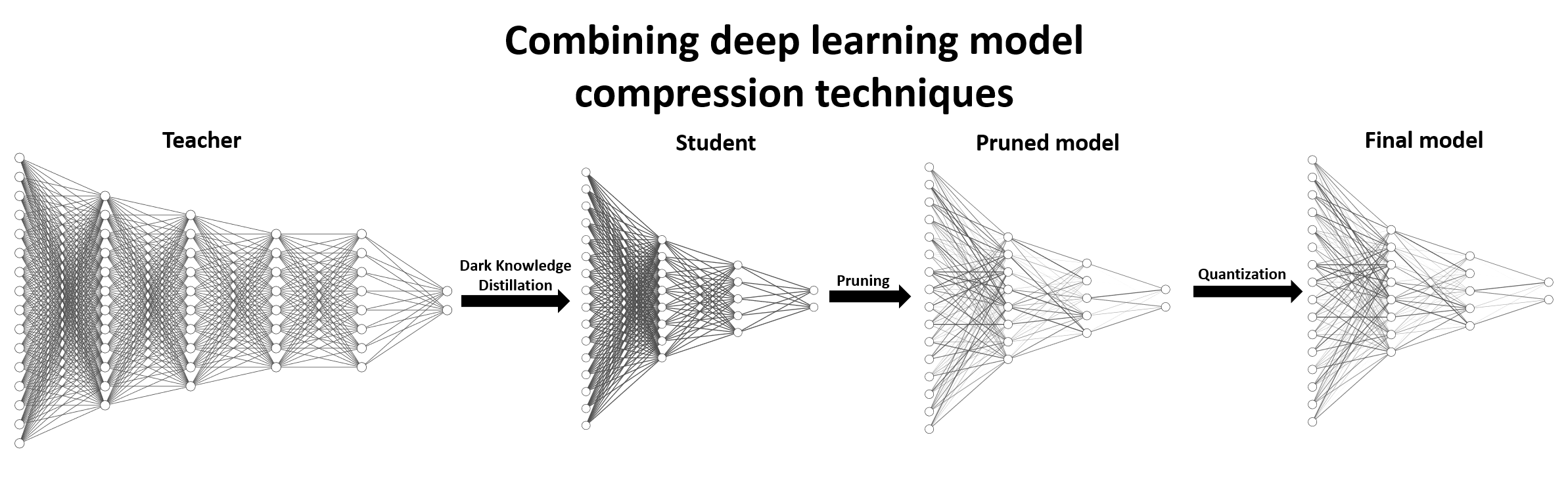

In this article, we evaluate the effect of combining three model compression techniques: dark knowledge distillation, pruning, and quantization. In our experimental setting, the classification of chest x-rays, combining the three techniques yielded a model that aggregates the individual advantages of each. The starting point was a combination of deep models with 95.05% accuracy, which is higher than the accuracy reported in some related works but below the state of the art of 96.39%. The compressed model reached 90.86% accuracy, a small loss relative to the reduction in size from 841MB to 40KB, which opens up the possibility of using deep models in edge computing applications.
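To make the three techniques concrete, the following is a minimal pure-Python sketch of each step on toy values, not the implementation used in the experiments. The temperature, the sparsity level, the symmetric int8 scheme, and all function names are assumptions chosen for illustration; the abstract does not specify them. The last helper computes the size reduction, assuming decimal units (1MB = 10^6 bytes, 1KB = 10^3 bytes).

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax over logits softened by a temperature (assumed hyperparameter)."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=4.0):
    """Cross-entropy between the softened teacher and student distributions."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return -sum(t * math.log(s) for t, s in zip(teacher, student))


def magnitude_prune(weights, sparsity):
    """Zero out the `sparsity` fraction of weights with the smallest magnitude."""
    n_drop = int(len(weights) * sparsity)
    by_magnitude = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(by_magnitude[:n_drop])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric linear quantization to the range [-127, 127] with one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map int8 codes back to approximate floating-point weights."""
    return [q * scale for q in quantized]


def compression_ratio(original_bytes, compressed_bytes):
    """How many times smaller the compressed model is."""
    return original_bytes / compressed_bytes


if __name__ == "__main__":
    # 1) Distillation: the student is penalized for diverging from the teacher.
    print(distillation_loss([2.0, 1.0], [1.0, 2.0]))

    # 2) Pruning: half of the toy weights are removed.
    pruned = magnitude_prune([0.5, -0.1, 0.3, -0.8], sparsity=0.5)
    print(pruned)

    # 3) Quantization: the surviving weights are stored as small integers.
    codes, scale = quantize_int8(pruned)
    print(codes, dequantize(codes, scale))

    # Size reduction reported in the abstract, assuming decimal units.
    print(compression_ratio(841e6, 40e3))
```

In a pipeline of this kind, distillation transfers the behavior of a large model to a small one, while pruning and quantization then shrink the stored representation of the small model; the ordering shown here is only one plausible arrangement.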

