Urban dual mode video detection system based on fisheye and PTZ cameras

Keywords:

Fisheye, PTZ camera, ONVIF, camera calibration, omnidirectional camera

Abstract

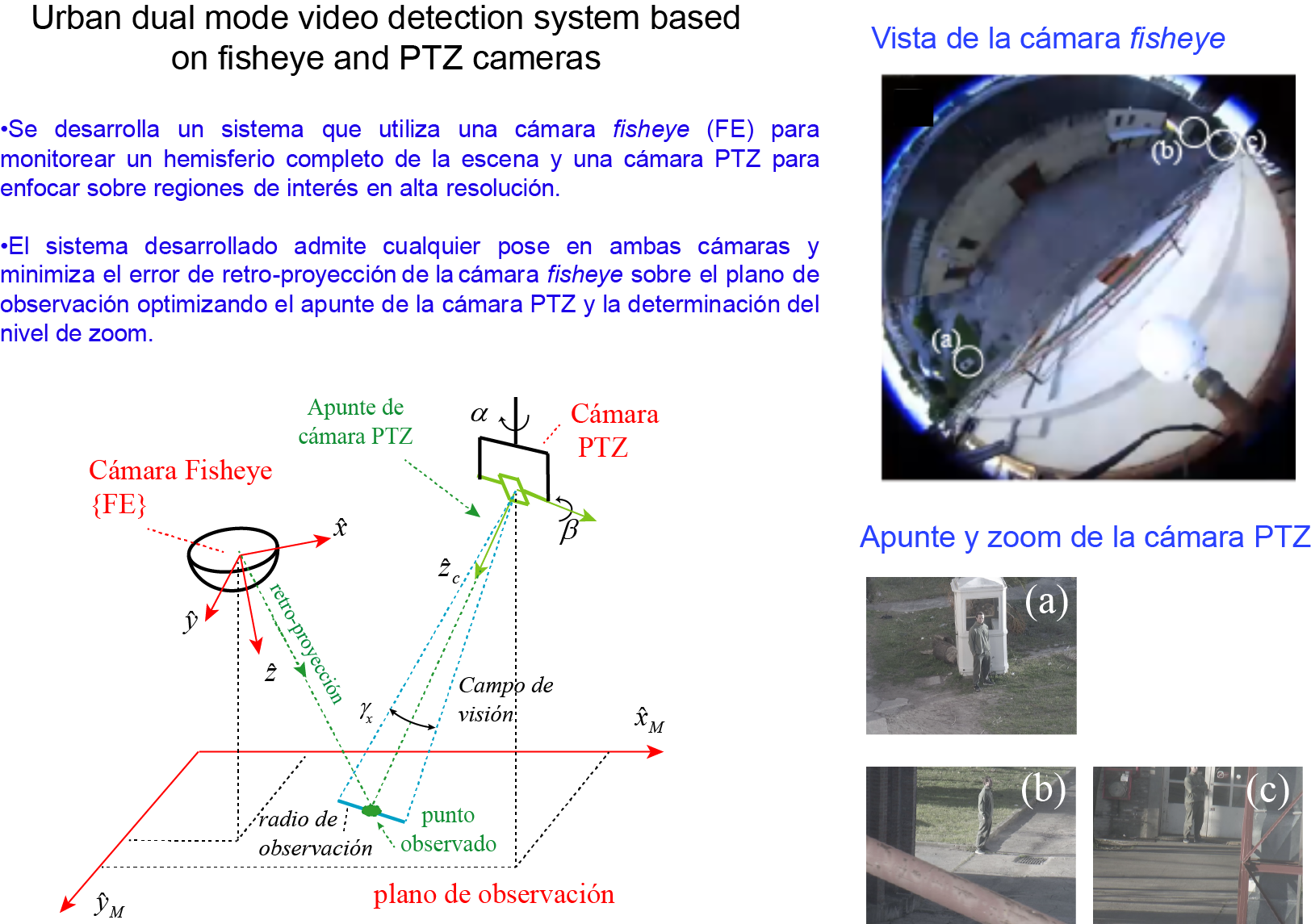

This work presents an artificial vision-based monitoring system for urban environments. It comprises a fisheye camera that monitors the scene’s 180° × 360° hemisphere and a Pan-Tilt-Zoom (PTZ) camera that captures narrower regions of interest in high resolution. Both IP cameras are interfaced through the ONVIF standard, which allows camera control (image acquisition and movement) and geometric calculations to be integrated on a single device. The events of interest (motion of vehicles and pedestrians) are assumed to happen on the ground plane. This assumption is required to solve the back-projection, the function that maps coordinates in the highly distorted fisheye images to the ground plane. A calibration strategy estimates the poses of the cameras without placing restrictions on their orientations or relative distance. It minimizes the back-projection error on the ground plane instead of the re-projection error in the image. Finally, a simple pointing and zoom adjustment strategy controls the PTZ camera. The system is tested in controlled laboratory conditions and shows accurate outdoor performance for pedestrian observation.
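The ground-plane assumption and the pointing step can be illustrated with a minimal sketch. It assumes a world frame whose ground plane is z = 0, a viewing ray that has already been obtained from a fisheye pixel through some calibrated camera model (not shown here), and a PTZ camera whose pan axis is the world z axis with zero pan along +x. These conventions, the function names, and the example coordinates are hypothetical and are not taken from the paper's implementation.

```python
import numpy as np

def backproject_to_ground(cam_center, ray_dir):
    """Intersect a viewing ray with the ground plane z = 0 (assumed world frame)."""
    cam_center = np.asarray(cam_center, dtype=float)
    ray_dir = np.asarray(ray_dir, dtype=float)
    if abs(ray_dir[2]) < 1e-12:
        raise ValueError("ray is parallel to the ground plane")
    # Solve cam_center.z + s * ray_dir.z = 0 for the scale s along the ray.
    s = -cam_center[2] / ray_dir[2]
    if s <= 0:
        raise ValueError("ray does not reach the ground in front of the camera")
    return cam_center + s * ray_dir

def pan_tilt_to_point(ptz_center, target):
    """Pan and tilt angles (degrees) that aim the PTZ optical axis at target.

    Assumed convention: pan is measured about the world z axis from +x,
    tilt is the elevation above the horizontal (negative when looking down).
    """
    d = np.asarray(target, dtype=float) - np.asarray(ptz_center, dtype=float)
    pan = np.degrees(np.arctan2(d[1], d[0]))
    tilt = np.degrees(np.arctan2(d[2], np.hypot(d[0], d[1])))
    return pan, tilt

# Hypothetical setup: both cameras mounted 5 m above the ground.
fisheye_center = [0.0, 0.0, 5.0]
ray = [1.0, 0.0, -1.0]  # viewing ray of a detected object, in world coordinates
ground_point = backproject_to_ground(fisheye_center, ray)
pan_deg, tilt_deg = pan_tilt_to_point([5.0, -5.0, 5.0], ground_point)
```

In a real deployment the camera centers and the ray direction would come from the calibrated poses and the fisheye model, and the resulting angles would still have to be mapped to the PTZ camera's own command range before being sent over ONVIF.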
