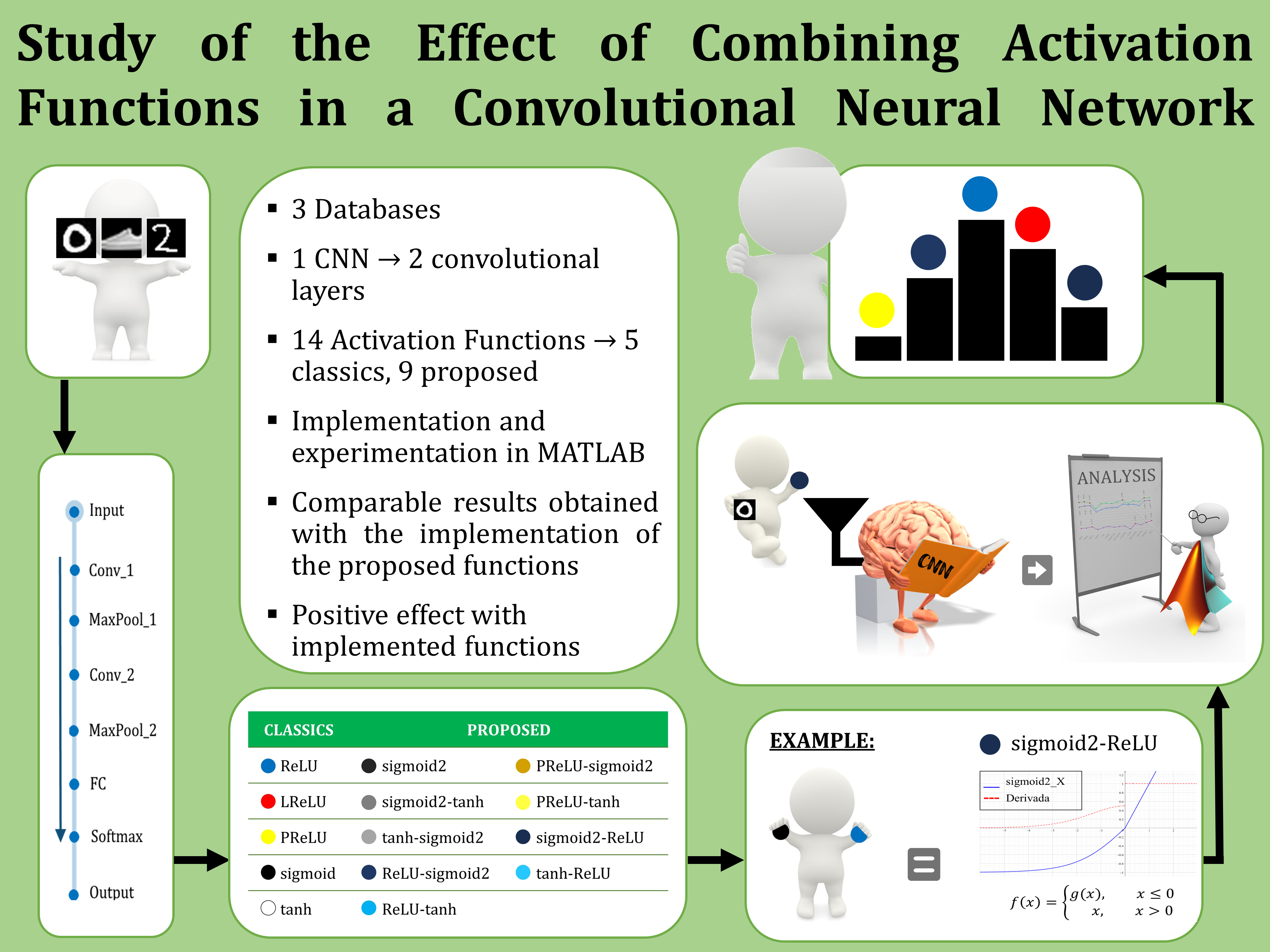

Study of the Effect of Combining Activation Functions in a Convolutional Neural Network

Keywords:

Convolutional Neural Networks, activation functions

Abstract

Convolutional Neural Networks (CNNs) have proven to be an effective approach for solving image classification problems. The output, accuracy, and computational efficiency of a CNN are determined mainly by its architecture, its convolutional filters, and its activation functions. Given the importance of the activation function, this paper presents nine new activation functions based on combinations of classical functions such as ReLU and sigmoid, together with a study of how activation functions affect the performance of a CNN. First, each new function is described, along with its graph, analytic form, and derivative. Then, a traditional CNN model using each new activation function is used to classify three 10-class databases: MNIST, Fashion MNIST, and a handwritten digit database that we created. Experimental results show that some of the proposed activation functions lead to better classification performance than classical activation functions. Moreover, our study shows that the accuracy of a CNN could be increased by 1.18% with the newly proposed activation functions.
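To make the idea of a combined activation function concrete, the following is a minimal sketch in plain Python. The abstract does not state the nine proposed functions, so the combination used here (the sum of ReLU and sigmoid) and the names `relu_plus_sigmoid`, `relu_plus_sigmoid_grad`, and `numeric_grad` are hypothetical choices for illustration only, not the paper's definitions. The sketch shows an analytic form, its derivative, and a finite-difference check of that derivative.

```python
import math


def relu(x: float) -> float:
    """Classical ReLU: max(0, x)."""
    return x if x > 0.0 else 0.0


def sigmoid(x: float) -> float:
    """Classical logistic sigmoid, written to avoid overflow for large |x|."""
    if x >= 0.0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def relu_plus_sigmoid(x: float) -> float:
    """Hypothetical combined activation (an assumption, not from the paper)."""
    return relu(x) + sigmoid(x)


def relu_plus_sigmoid_grad(x: float) -> float:
    """Analytic derivative: step(x) + sigmoid(x) * (1 - sigmoid(x)).

    ReLU is not differentiable at x = 0; this sketch uses 0 there.
    """
    s = sigmoid(x)
    step = 1.0 if x > 0.0 else 0.0
    return step + s * (1.0 - s)


def numeric_grad(f, x: float, h: float = 1e-6) -> float:
    """Central finite difference, used only to sanity-check the derivative."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


if __name__ == "__main__":
    for x in (-1.5, 0.5, 2.0):
        analytic = relu_plus_sigmoid_grad(x)
        numeric = numeric_grad(relu_plus_sigmoid, x)
        print(f"x={x:+.1f}  f(x)={relu_plus_sigmoid(x):.6f}  "
              f"f'(x)={analytic:.6f}  numeric={numeric:.6f}")
```

Checking the analytic derivative against a numerical one is a common safeguard when a new activation function is to be plugged into backpropagation, since an incorrect derivative silently degrades training.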
