Vision System Prototype for Inspection and Monitoring with a Smart Camera

Keywords:

Artificial vision, smart camera, embedded systems

Abstract

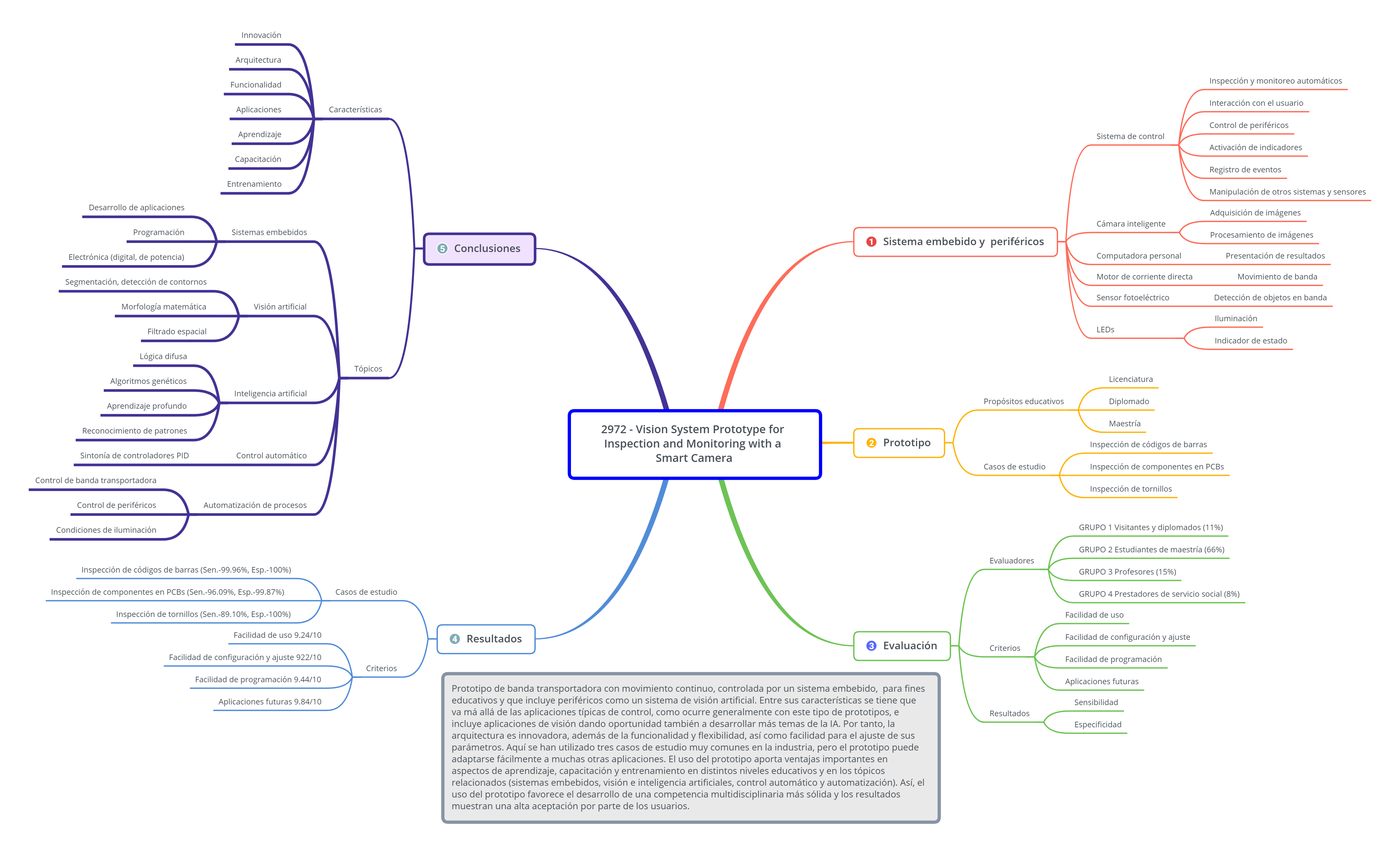

This paper presents the design of a prototype artificial vision system for the automatic inspection and monitoring of objects on a conveyor belt, using a 2D BOA-INS smart camera. The prototype consists of a conveyor belt and an embedded system based on an Arduino Mega board, which controls the system. Its main peripherals are the smart camera, a direct current motor, a photoelectric sensor, LED illumination, and LEDs that indicate the status (good or defective) of each evaluated object. The prototype is intended for educational use: undergraduate, master's, and diploma students can simulate a continuous production line controlled by an embedded system and perform quality control by monitoring it through a vision system and a personal computer. This lets them put into practice topics in embedded systems, artificial vision, artificial intelligence, pattern recognition, and automatic control, as well as the automation of real processes.
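The control flow implied by this architecture can be illustrated with a small simulation. This is only a sketch under stated assumptions, not the firmware described in the paper: it assumes the photoelectric sensor is what signals an object's arrival, that the camera returns a simple pass/fail verdict, and that one LED is lit per verdict. The class `InspectionStation`, its fields, and the `inspect` callback are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, List, Optional, Tuple


@dataclass
class InspectionStation:
    """Hypothetical model of the controller's decision logic (not the paper's code)."""

    # Stand-in for the smart camera: returns True when the object passes.
    inspect: Callable[[str], bool]
    motor_on: bool = False
    good_led: bool = False
    defect_led: bool = False
    log: List[Tuple[str, str]] = field(default_factory=list)

    def run(self, belt: Iterable[Optional[str]]) -> List[Tuple[str, str]]:
        """Process one pass of the belt; None means the sensor saw no object."""
        self.motor_on = True  # start the DC motor
        for item in belt:
            if item is None:  # assumed: sensor beam not interrupted
                continue
            passed = self.inspect(item)  # assumed: sensor event triggers the camera
            self.good_led = passed
            self.defect_led = not passed
            self.log.append((item, "good" if passed else "defect"))
        self.motor_on = False  # stop the belt when the run ends
        return self.log


# Usage: a toy verdict function flags any object labelled "scratched".
station = InspectionStation(inspect=lambda obj: obj != "scratched")
results = station.run(["ok", None, "scratched"])
```

On real hardware the loop would poll a digital input and drive output pins instead of iterating over a list; the simulation keeps only the decision logic so that it can be run and tested on a personal computer.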
